Unfolding


TEAM

with Yuran Ding, Jenna Kim, Taery Kim and Nandini Nair

ROLE

Concept Development, Programming, Prototyping

PROJECT DURATION

5 weeks

TOOLS

p5.js, Teachable Machine, Zoom, OBS Camera, Illustrator

Background

We were asked to design a system for digital signage that could respond to dynamic contexts based on specified conditionals and events, using Zoom and Google’s Teachable Machine as the main tools.

For this, the team and I thought about the current pandemic and how it has affected every interaction, including leisure time, now that we stare at our screens 130% more than before. We saw this situation as an opportunity to take care of ourselves in an interactive way.

Loneliness among young people and university students has become a major concern during the pandemic, and online meeting platforms like Zoom limit the exchange of emotions and social communication.

How can we care for one another, across virtual platforms?

Outcome

Unfolding

Unfolding is an experience that integrates paper-folding and self-reflection through a meditative exercise involving journaling, in a new and interactive Zoom space. Its aim is to create a caring, therapeutic, multi-modal environment that combats fatigue, loneliness, and emotional distancing on Zoom.

A paper-folding activity that helps you unfold your emotions.

HOW it works

Unfolding is an experience that uses Zoom as the main platform for participants to engage in emotion-level communication.

As shown in the video, it all starts with an instructor who asks participants to get origami paper in multiple colors, specifically the colors of the emotion wheel.

While each user follows the steps to create the origami, the instructor pairs every fold with a question that helps the user unfold their emotions through journaling.

Steps to fold an origami fox - along with the journaling questions.

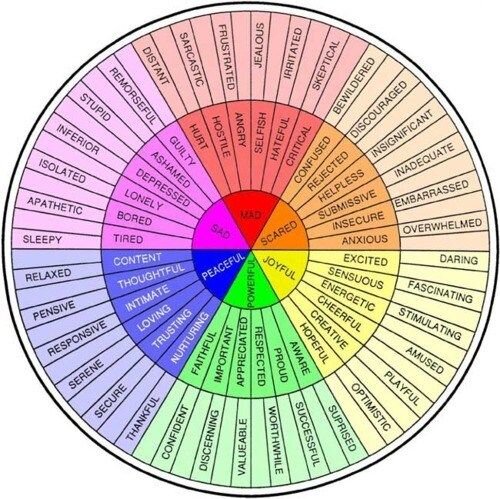

The emotion wheel is based on 6 basic emotions, each represented by a color and an animal or flower. The animal or flower is displayed every time the user holds up paper of the color related to that emotion, as shown below.

By the end of the session, the user should have a beautiful origami figure and a sequence of questions answered according to their mood at the time. Once the users hold up their final origami, the Zoom window displays the moods (animals/flowers) each user showed during the session, along with a sound orchestra in sync with the other users.

The self-reflective component of journaling while doing origami enhances the experience of assessing our thoughts. When we describe what is going on and observe how it influences the outcomes we get in life, we can gain more control over our emotional blind spots and stay focused on our goals. So it is a very simple and fun activity with a deep emotional purpose.
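The flow described above (show a figure when a color is held up, then replay the figures at the end) could be sketched roughly as follows. This is a minimal illustration, not the code from the project's p5.js sketch: the `EMOTION_WHEEL` entries are placeholders for the six real color and emotion pairs, and `createSession`, `holdPaper`, and `finish` are hypothetical names.

```javascript
// Placeholder wheel: the real project used six color/emotion pairs,
// which are not listed here. These two entries are illustrative only.
const EMOTION_WHEEL = new Map([
  ["yellow", { emotion: "joy", figure: "bird" }],
  ["blue", { emotion: "sadness", figure: "flower" }],
]);

// Hypothetical session tracker for a single user.
function createSession() {
  const shown = []; // figures displayed so far, in order

  return {
    // Call whenever a paper color is detected.
    // Returns the figure to display, or null for an unknown color.
    holdPaper(color) {
      const entry = EMOTION_WHEEL.get(color);
      if (!entry) return null;
      // A video loop detects the same paper on many frames,
      // so only record a figure when it differs from the previous one.
      if (shown[shown.length - 1] !== entry.figure) {
        shown.push(entry.figure);
      }
      return entry.figure;
    },

    // Call when the finished origami is held up.
    // Returns a copy of the mood history to display.
    finish() {
      return [...shown];
    },
  };
}
```

Recording a figure only when it changes keeps the end-of-session summary short even though detection runs on every frame.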

Design Process

01

Understanding the problem

For this assignment, we were asked to create a system where digital signage could respond to dynamic contexts based on specified conditionals and events. This was limited to the tools that we had to work with: Zoom and Teachable Machine.

ZOOM

We already knew Zoom pretty well by this point: we had engaged with it more than any other tool during the pandemic because our remote classes ran on it. So we knew its basic interactions: video, chat, sound, screen share, emojis, raise hand, yes/no buttons, and remote control of the shared screen, among others.

But the most important features to consider here were video and sound. Once we knew those were the core interactions to play with, we focused on them.

Photo by Gabriel Benois on Unsplash

Teachable Machine

Google’s Teachable Machine is a web-based tool that makes creating machine learning models fast, easy, and accessible to everyone. The interface is really easy to use: the user trains a computer to recognize images, sounds, and poses without writing any machine learning code, then uses the model in projects, sites, apps, and other things.

As mentioned, it can classify images, sounds, and poses. The one we cared most about was image classification, because the video feature in Zoom was fundamental to the project.

Once we understood the tools and fundamental features we had to work with, we started with the concept development.

02

Concept Development

For this stage, we started to think about opportunities that could leverage both tools in the best way possible. We wanted to give our project a good purpose, not do it just for the fun of it. So first we needed to evaluate certain criteria and, based on those, move forward with the brainstorming.

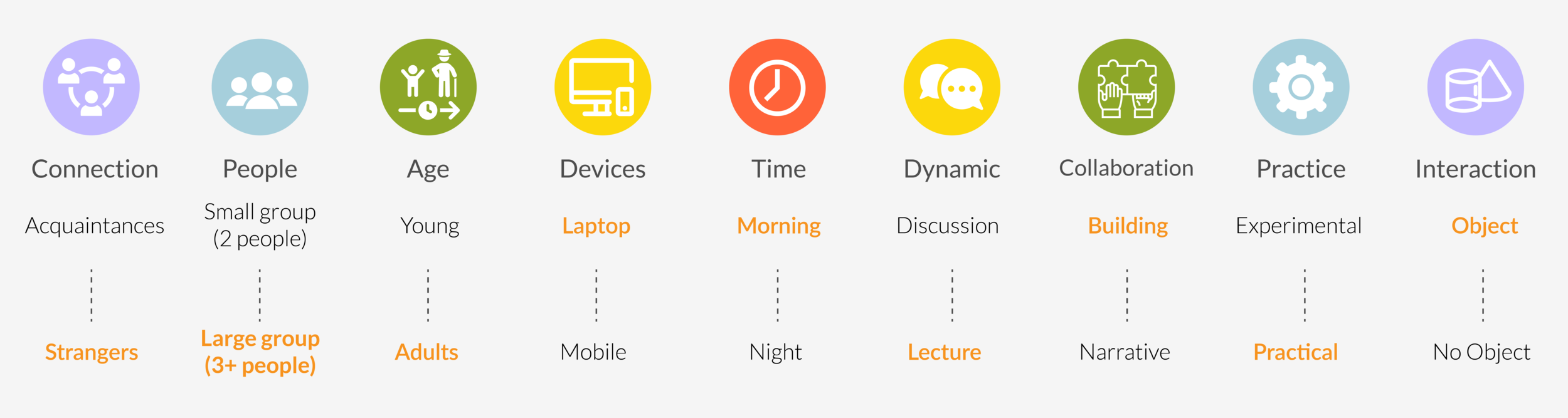

Once we had voted on which side of each spectrum to take for the criteria above (the selected ones are in bold orange), we could focus on the opportunities that followed all those guidelines.

While we were voting on the criteria for our project, one of the teammates found that loneliness was one of the most relevant themes of the pandemic, so we focused on how to ease it. At first, we thought about group yoga, with Teachable Machine classifying certain poses to trigger audio or another prompt for the users. But we realized that in these relaxing activities users aren’t truly engaging with the video in the Zoom meeting; they are just following the instructor, and we wanted something more dynamic.

Then one of the teammates suggested origami, which can help reduce stress and allows for meditation. So we decided to go with the origami activity.

03

Design Decisions

One of the biggest decisions was the object we wanted to play with and classify using the Teachable Machine (TM) tool. Once we confirmed that we wanted to go with the origami activity, we saw that papers of multiple colors could be classified to trigger different interactions according to the color of the paper. The precise folds could also be captured by the TM classification algorithm.
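As a rough sketch of how a color classification could drive an interaction, the helper below picks the most confident label from a list of predictions and ignores frames where the model is unsure. It assumes predictions arrive as `{ label, confidence }` objects; the name `pickLabel`, the 0.8 threshold, and the color labels are illustrative assumptions rather than values from the project.

```javascript
// Hypothetical helper: return the most confident label,
// or null when no prediction is confident enough to act on.
// Assumes results shaped like [{ label: "red", confidence: 0.93 }, ...].
function pickLabel(results, minConfidence = 0.8) {
  if (!Array.isArray(results) || results.length === 0) return null;
  // Do not rely on the input being sorted; find the maximum explicitly.
  const best = results.reduce((a, b) => (b.confidence > a.confidence ? b : a));
  return best.confidence >= minConfidence ? best.label : null;
}
```

A threshold like this is one way to keep the display from flickering between figures when the paper is partly out of frame.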

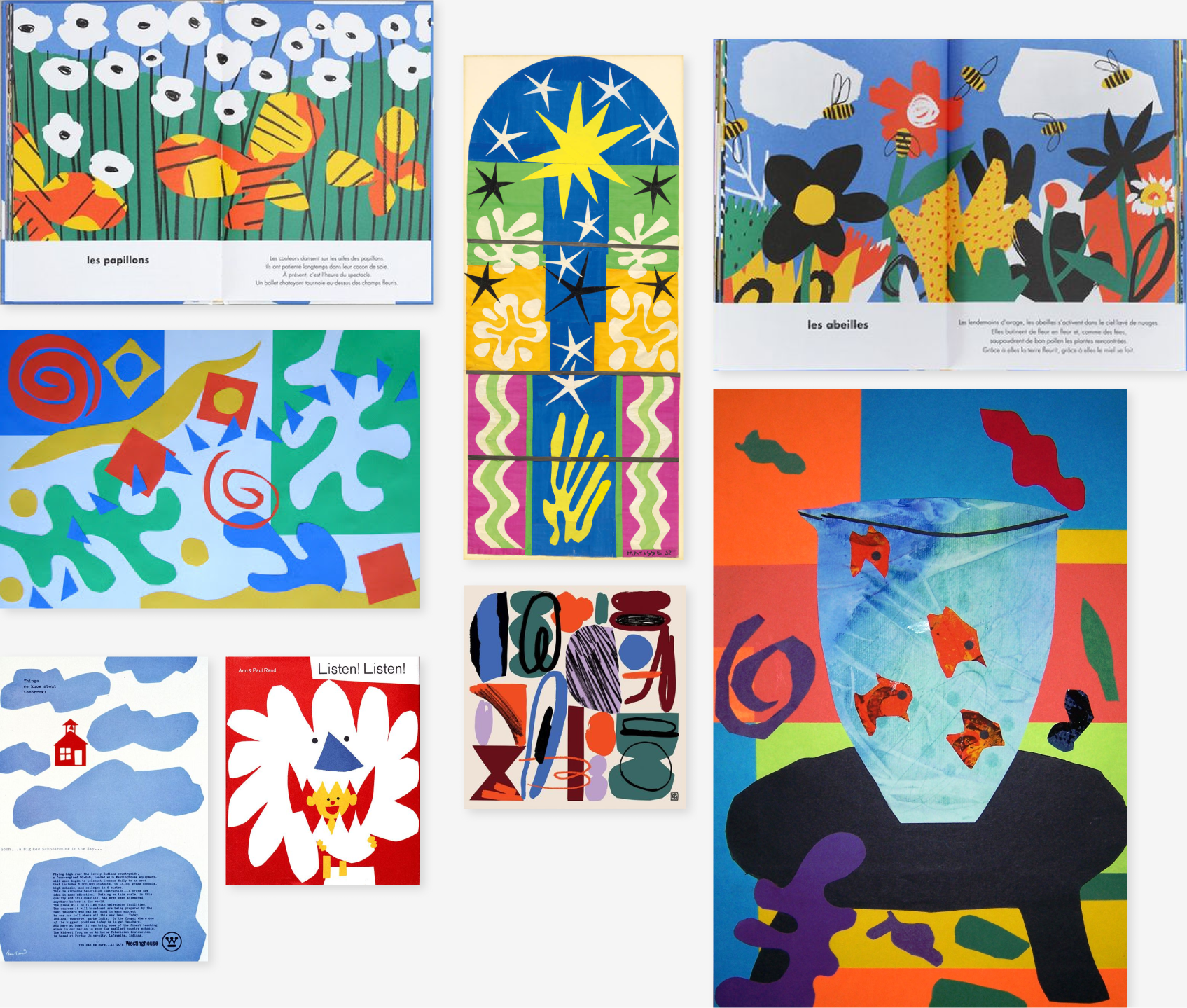

For the visual system, we went for a colorful palette that could represent the power and energy of each emotion. It was important for us to allow the users to feel safe in an intimate but open space with others, so we also decided on the paper-cut figures inspired by the art of Ann and Paul Rand.

Another major decision was the colors used for TM model training. The colors had to be very distinct for the model to differentiate between them, so we used an emotion wheel we found that is used in psychological treatment, and went with its 6 basic colors and emotions.

Finally, the birds, bee, and flowers used for the project were made by 2 of my teammates, who crafted them beautifully with the idea that the project would cover different origami birds and flowers.

04

Prototyping

I was in charge of prototyping, so before I asked my teammates to train their models, I had to train mine first to verify how the Teachable Machine model worked and how they would use it. I also took responsibility for coding the p5.js sketch for the demo, which took many tries and a lot of lines of code.

I folded a lot of origami figures to understand how precisely the model could classify by color or by shape. I realized that since it classifies the pixels of the images in the training data, each fold of the same color had to be held in a particular position to be distinct. I also learned through my mistakes that once the model was uploaded and the tab closed, you couldn’t update or improve the sample data, so I had to retrain a couple of models from scratch.

For most of the images, I had to put placeholders in the code until I got the final assets, because the images displayed during the session changed a lot before they were final. The good thing was that these were minor changes in the code, since the trained model worked with general labels that could be connected to any asset.
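The idea of general labels connected to swappable assets can be illustrated with a small lookup that falls back to a placeholder. This is a sketch under assumptions: the label names, file paths, and the `assetFor` helper are hypothetical and do not come from the project's sketch.

```javascript
// Hypothetical placeholder image used until a final asset exists.
const PLACEHOLDER = "assets/placeholder.png";

// Return the asset registered for a model label,
// or the placeholder when none has been registered yet.
function assetFor(label, assets) {
  return assets.has(label) ? assets.get(label) : PLACEHOLDER;
}

// Example registry: only one final asset has been delivered so far.
const assets = new Map([["yellow", "assets/bird-final.png"]]);

const ready = assetFor("yellow", assets); // final asset path
const pending = assetFor("blue", assets); // falls back to the placeholder
```

With this arrangement, swapping in a final image only touches the registry, while the classification code keeps working with the same labels.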

All the code can be found here - p5.js sketch

Finally, for the demo we used the OBS camera, and we had to follow “The Coding Train” tutorial to install it. Although it was a hack, it was the best way to make it work live during the demo.

05

What did I learn?

Team collaboration

This was a particular and challenging project because it was the first time I had to work with 4 other teammates who were geographically distant and working in different time zones. Scheduling outside of class time was problematic, but we made it work by splitting tasks and meeting once a week to discuss important matters. I realized that you don’t need to keep the whole team waiting around idle; you can assign relevant objectives to each team member and gather a few times to discuss the things that require the team’s decision.

Real Interactive interfaces

“It’s alive, alive!” - Henry Frankenstein

I have a personal take on this quote, since nothing is more satisfying than seeing working code, especially if it is YOUR code. I truly enjoyed the discovery involved in playing with new tools like Teachable Machine and making them work with p5.js. The result was real only up to a certain point, of course, but it was still a very palpable and genuine experience of what can be done and how it will look. This gives me a lot of hope for more software that allows designers to play with real interfaces.